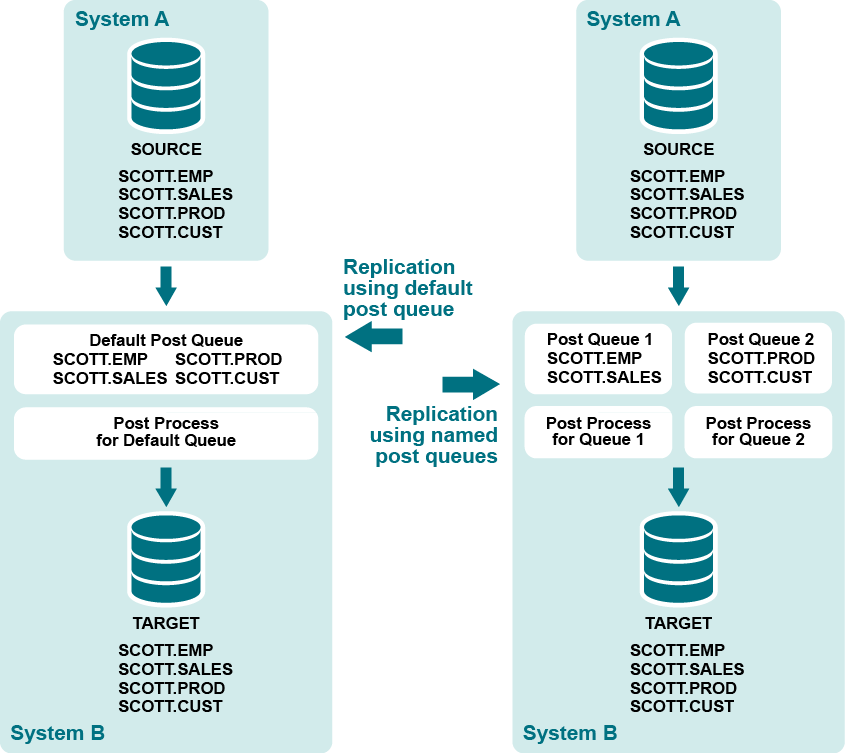

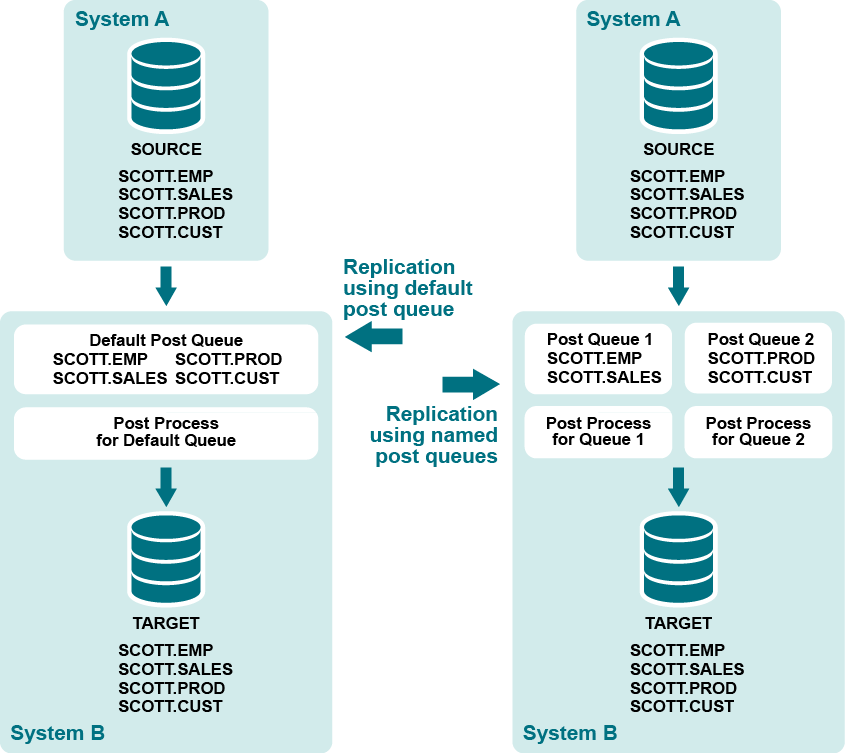

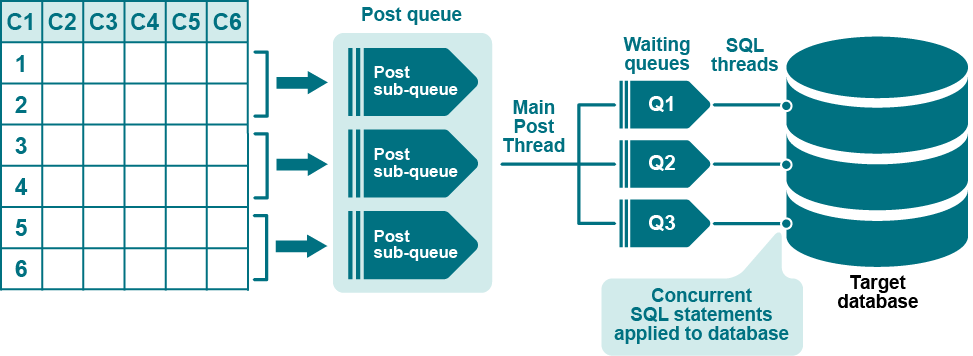

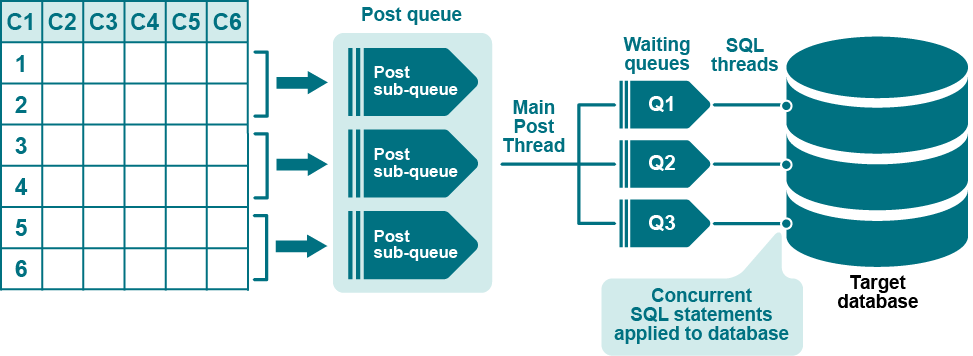

A named post queue is an optional component of the routing map in the configuration file. A named post queue is a user-defined post queue with its own Post process, which together operate in parallel to the default post queue and Post process. You can define one or more named post queue-process pairs to establish a set of parallel Post replication streams.

Supported sources and targets

Benefits of named post queues

You can use named post queues to isolate data from different tables into two or more separate Post streams. By using named post queues, you can improve posting performance by isolating objects such as the following that cause processing bottlenecks:

- large tables

- objects that have LOB columns. Named post queues are recommended for objects that contain LOBs.

- objects that involve large transactions.

- any objects whose operations you want to isolate.

Process the remaining objects through additional named post queues, or use the default post queue. Objects in the configuration file with a standard routing map (host@target) are replicated through a default post queue.

You can use horizontal partitioning to divide the rows of very large tables into separate named post queues as an added measure of parallelism.

You can set SharePlex parameters to different settings for each queue-process pair. This enables you to tune the performance of the Post processes based on the objects replicating through each one.

Considerations when using named post queues

Configure a named post queue: Oracle to all targets

If you are using named export queues, SharePlex creates a named post queue-process pair for each one by default. If you are not using named export queues, use the following syntax to define a named post queue in the configuration file by adding the :queue component to the routing map:

host:queue@target

Configuration with named post queue in routing map

| Datasource: o.SID |

| src_owner.table |

tgt_owner.table |

host:queue[@database_specification] |

| host |

The name of the target system. |

| queue |

The unique name of the post queue. Queue names are case-sensitive on all platforms. One word only. Underscores are permissible, for example:

sys2:post_q1@o.myora |

| database_specification |

One of the following for the datasource:

o.oracle_SID

One of the following if the target is a database:

-

o.oracle_SID

o.tns_alias

o.PDBname

r.database_name

c.oracle_SID |

Examples

The following configuration creates one post queue named Queue1 that routes data from table scott.emp and another post queue named Queue2 that routes data from table scott.cust.

| Datasource:o.oraA |

|

|

| scott.emp |

scott.emp |

sysB:Queue1@o.oraC |

| scott.cust |

scott.cust |

sysB:Queue2@o.oraC |

The following shows how a named post queue is specified when you are routing data in a pass-through configuration using an intermediary system. For more information, see Configure Replication to Share or Distribute Data.

| Datasource:o.oraA |

|

|

| scott.emp |

scott.emp |

sysB*sysC:Queue1@o.oraC |

Configure a named post queue for PostgreSQL

If you are using named export queues, SharePlex creates a named post queue-process pair for each one by default. If you are not using named export queues, use the following syntax to define a named post queue in the configuration file by adding the :queue component to the routing map:

host:queue@target

Supported targets

PostgreSQL, Oracle, SQL Server, and Kafka

Configuration with named post queue in routing map

| Datasource:r.dbname |

| src_schema.table |

tgt_schema.table |

host:queue[@database_specification] |

| host |

The name of the target system. |

| queue |

The unique name of the post queue. Queue names are case-sensitive on all platforms. One word only. Underscores are permissible, for example:

sys2:post_q1@r.dbname |

| database_specification |

r.database_name |

Note: Allow no spaces between any components in the syntax of the routing map.

Examples

The following configuration creates one post queue named Queue1 that routes data from table scott.emp and another post queue named Queue2 that routes data from table scott.cust.

| Datasource:r.dbname |

|

|

| scott.emp |

scott.emp |

sysB:Queue1@r.dbname |

| scott.cust |

scott.cust |

sysB:Queue2@r.dbname |

The following shows how a named post queue is specified when you are routing data in a pass-through configuration using an intermediary system.

| Datasource:r.dbname |

|

|

| scott.emp |

scott.emp |

sysB*sysC:Queue1@r.dbname |

How to identify a named post queue

A named post queue is identified by the datasource (source of the data) and one of the following:

- the name of an associated named export queue (if the Import is linked to a named export queue)

- the user-assigned post-queue name (if the Import is linked to a default export queue).

You can view named post queues through sp_ctrl:

- Use the qstatus command to view all queues on a system.

- Use the show command to view all Post processes with their queues.

See the SharePlex Reference Guide for more infomation about theses commands.

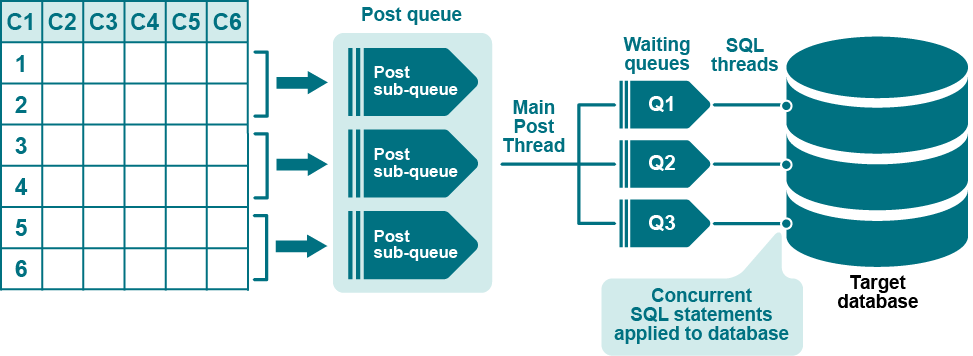

Use horizontally partitioned replication to divide the rows of a table into separate processing streams. You can use horizontally partitioned replication to:

- Replicate a subset of rows to a target, while retaining the rest of the rows in the source.

- Replicate different subsets of rows to different targets.

- Divide the replication of a source table into parallel Post queues for faster posting to the target table.

Supported sources and targets

-

PostgreSQL to PostgreSQL, Oracle, SQL Server, and Kafka

-

Oracle to all targets

-

PGDB as a Service to PGDB as a Service

-

PGDB as a Service to Oracle

-

PGDB as a Service to PostgreSQL

Overview of horizontally partitioned replication: Oracle to all targets

To configure horizontally partitioned replication for a table, the steps are:

-

Define row partitions and link them to a partition scheme.

- Specify the name of the partition scheme in the SharePlex configuration file to include the partitions in replication.

Partition types

The row partitions in a partition scheme can be based on one of the following:

- Columns: A column-based partition scheme contains row partitions defined by a column condition. A column condition is a WHERE clause that defines a subset of the rows in the table.

- Hash: A hash-based partition scheme contains row partitions defined by a hash value that directs SharePlex to distribute rows evenly across multiple queues.

About column-based partition schemes

You can use row partitions based on column conditions for the following purposes:

- Use a single row partition to replicate only a subset of the rows of a table. For example, you can replicate only those rows where the value of the YEAR column is greater than 2014. The partition scheme in this case could be named "Since2014" or "Recent."

-

Use multiple row partitions to divide the rows of a table so that each set of rows replicates to a different target. For example, a table named CORPORATE.SALES could have two row partitions named "East" and "West." The column conditions are defined accordingly, where the rows that satisfy REGION = EAST replicate to one location and the rows that satisfy REGION = WEST replicate to a different location. The partition scheme could be named "Sales_by_region."

-

Use multiple row partitions to divide the rows of a table into parallel processing streams (parallel Export-Import-Post streams) for faster posting to a target table. For example, you can improve the flow of replication to a heavily updated target table. The use of column conditions for this purpose is appropriate only if the table contains a column that enables you to split the processing evenly among parallel Post processes.

About hash-based partition schemes

You can use row partitions based on a hash value to divide the rows of a table into parallel processing streams (parallel Export-Import-Post) for faster posting to a target table. The advantage of using a hash value over column conditions to create partitions is that the rows are divided evenly and automatically by SharePlex, without the need to reference table columns in WHERE clauses. However, unlike column-based partition schemes, you cannot use the SharePlexcompare or repair commands for hash-based partition schemes.

Combine partitioned replication with full-table replication

You can combine horizontally partitioned and vertically partitioned replication for maximum control over how information is distributed.

For example:

- A corporate headquarters maintains a primary corporate database.

- Each of the corporation's four regional offices maintains its own database.

- Corporate headquarters uses vertically partitioned replication to share some column data of a table with the regional offices, but does not share any columns that contain sensitive data.

- The rows of the table are horizontally partitioned into four groups (East, West, North, South) for replication, so that each region receives only the record changes that apply to it.

Horizontally partitioned replication can be used in conjunction with full-table replication for the same table, for example to route groups of rows to different reporting systems and all rows to a backup system.

Limitations of use

Hash-based partitioning does not support the following:

- The compare and repair commands.

- Index-organized tables (IOT) and tables that contain LOBs or LONGs.

- Operations that delete or update a key value and then reinsert the same key value. This can cause unique constraint violations because of different rowids.

- Column-based partitioning on the same table.

Hash-based partitioning also does not support operations that cause rows to migrate into a different partition. Examples of such operations are:

- Update a value so that it moves to a new row partition

- Table reorganization

- Split a table partition or combine two partitions

- Export or import the table

- ALTER TABLE with the MOVE option

- ALTER TABLE with the SHRINK SPACE option

- FLASHBACK TABLE

- Redefine a table by using dbms_redefinition

- UPDATE to a non-partitioned table that changes row size so that the data does not fit into the current block

- DELETE of a row in a non-partitioned table and then re-insert.

Define partition schemes and row partitions

Use the add partition command to create row partitions and assign them to a partition scheme.

To partition rows based on column conditions

Issue add partition for each row partition that you want to create in a given partition scheme. When you create the first row partition, SharePlex creates the partition scheme as well.

sp_ctrl> add partition to scheme_name setcondition = column_condition and route = routing_map [and name = name] [and tablename =owner.table] [and description =description]

To partition rows based on a hash value:

Issue add partition once to specify the number of hash partitions to create.

sp_ctrl> add partition to scheme_name set hash = value and route = value

Add partition command syntax

Note: After you specify add partition with toscheme_name and the set keyword, all other components can be in any order.

| to scheme_name |

to is a required keyword indicating the row partition is being added to scheme_name.

scheme_name is the name of the partition scheme. The partition scheme is created by the first add partition command that you issue, which will also specify the first set of rows to partition.

If you are making heavy use of horizontal partitioning, it may help to establish naming conventions for your partition schemes. |

|

set |

Required keyword that starts the definition of the row partition. |

| condition = column_condition |

Creates a row partition based on a column condition. The condition must be in quotes. Use standard WHERE conditional syntax such as ((region_id = West) and region_id is not null). See How to create a valid column condition for additional information.

The condition and hash components are mutually exclusive. |

| hash = value |

Creates a row partition based on a hash value. The specified value determines the number of row partitions in the partition scheme.

The condition and hash components are mutually exclusive. |

| route = routing_map |

The route for this partition. This can be one of the following:

Partition based on a column condition:

Specify any standard SharePlex routing map, for example: sysB@o.myora or sysB:q1@o.myora or sysB@o.myora+sysC@o.myora (compound routing map).

If the target is JMS, Kafka, or a file, then the target should be specified as x.jms, x.kafka, or x.file, for example: sysA:hpq1@x.kafka.

To route a partition to multiple target tables that have different names, do the following:

- Issue a separate add partition command for each different target name. Use the tablename option to specify the name.

- In the configuration file, specify any of these target tables as the target table in the entry that uses this partition scheme. SharePlex will detect the other names when the configuration is activated.

- Set the SP_ORD_FIRST_FIND parameter to 0 so that SharePlex checks all of the column conditions in the partition scheme. By default SharePlex assumes that any given row change will satisfy only one column condition in the partition scheme. For more information, see the SharePlex Reference Guide.

Partition based on a hash:

Use the following format to direct SharePlex to create a named post queue for each partition:

host:basename|#{o.SID | r.database}

where:

- host is the name of the target system.

- basename is the base name that is assigned to all queues.

- |# directs SharePlex to number the queues sequentially by appending the base name with an integer, starting with 1 to the value set with hash.

- o.SID for an Oracle target or r.database for an Open Target target.

|

| name = name |

(Recommended) A short name for this partition. This option is only useful for partitions based on column conditions. A name eliminates the need to type out long column conditions in the event that you need to modify or drop the partition in the future. |

| tablename = owner.table |

(Optional) Use this option when there are multiple target tables and one or more have different names. Issue a separate add partition command for each name.

The table name must be fully qualified. If case-sensitive, the name must be specified in quotes.

Example:

add partition to scheme1 set name = p1 and condition = "C1 > 200" and route = sysb:p1@o.orasid and tablename = myschema.mytable |

| description = description |

(Optional) Description of this partition. |

Examples

Partitions based on a column condition

Route different sets of rows through different post queues:

sp_ctrl> add partition to scheme1 set name = q1 and condition = "C1 >= 200" and route = sysb:q1@o.orasid

sp_ctrl> add partition to scheme1 set name = q2 and condition = "C1 < 200" and route = sysb:q2@o.orasid

Route different sets of rows to different target systems and different table names from the source:

sp_ctrl> add partition to scheme1 set name = east and condition = "area = east" and route = sys1e@o.orasid and tablename = ora1.targ

sp_ctrl> add partition to scheme1 set name = west and condition = "area = west" and route = sys2w@o.orasid and tablename = ora2.targ

Partitions based on a hash

Divide rows into four partitions, each processing through a different post queue:

sp_ctrl> add partition to scheme1 set hash = 4 and route = sysb:hash|#@o.ora112

How to create a valid column condition

The following are guidelines for creating column conditions. These guidelines do not apply to row partitions that are created with a hash value.

Choose appropriate columns

The types of columns on which you base your column conditions vary per datasource:

Base column conditions on columns whose values will not change, such as PRIMARY or UNIQUE key columns. The objective is to avoid a partition shift, where changes made to the conditional columns of a partition can cause the underlying data to satisfy the conditions of a different (or no) partition.

Partition shift case 1: The column value is updated so that the new value no longer satisfies any column condition:

- SharePlex performs the operation, but future operations on that row are not replicated. The reason: the row no longer satisfies a column condition.

- The source and target tables of the original partition are now out of synchronization, but Post returns no errors.

Partition shift case 2: A row that satisfies one column condition gets updated to meet a different condition:

- Post cannot find a matching target row. The reason: the original change was not replicated because it did not meet the column condition.

- Post returns an out-of-sync error.

You can use the following method to repair the out-of-sync rows that are caused by changes to the values of column conditions:

- Use the compare command to repair the out-of-sync rows. For more information about this command, see the SharePlex Reference Guide.

Additionally, you can ensure that data is replicated properly by setting the following parameter on the source prior to activating the configuration file.

- Set the SP_ORD_HP_IN_SYNC parameter to a value of 1. When this parameter is enabled, if an UPDATE changes a column (conditional column) value resulting in a row that no longer satisfies the correct condition, SharePlex corrects the row. Enabling this parameter causes some performance degradation, depending on how many tables are configured for horizontally partitioned replication. See the SharePlex Reference Guide for more information and a list of conditions corrected by this parameter.

Note: If you are using a column other than a key to base the column condition on, and you notice reduced performance with horizontally partitioned replication enabled, add a log group for that column.

Use supported data types

SharePlex supports the following data types in column conditions:

- NUMBER

- DATE

- CHAR

- VARCHAR

- VARCHAR2

- LONG VARCHAR

Notes:

-

For the dates, SharePlex uses MMDDSYYYYHH24MISS. For example:

hiredate<‘1111 2011000000’

-

Horizontally partitioned replication does not support the following:

- data types other than the ones listed in this section. This also excludes large types like LOBs and object types such as VARRAYs and abstract data types.

- Oracle TO_DATE function

- UPDATEs or INSERTs on LONG columns larger than 100k

- Sequences

- TRUNCATEs of an Oracle partition

|

Use standard conditional syntax

The following list shows the conditional syntax that SharePlex supports in a column condition, where:

- value can be a string or a number. Enclose strings and dates within single quote marks (‘west’). Do not use quote marks for numbers .

- column is the name of a column in the table that you are configuring to use horizontally partitioned replication.

|

column = value

not (column = value)

column > value

value > column

column < value

column <= value

column >= value

column <> value

column != value

column like value

column between value1 and value2

not (column between value1 and value2 )

column is null

column is not null |

Conditions can be combined into nested expressions with parentheses and the AND, OR, and NOT logical connectives.

Example column conditions

not (col1 = 5)

(col2 = 5) and not (col3 = 6)

((col1 is not null) and (col2 = 5))

Additional guidelines

- NULLs are replicated by SharePlex in cases such as this one: not (department_id = 90). If department_id is NULL, it is replicated. To avoid replicating records with NULLs, include the column is not null syntax as part of the condition, for example: not (department_id = 90) and department_id is not null.

- If parentheses are not used to indicate operator precedence, SharePlex supports operator precedence in the same order that SQL does. For example, a condition not x and y behaves the same way as (not x) and y. A condition x and y or z behaves the same as (x and y) or z. When a condition includes parentheses, the explicit precedence is respected.

- If the condition column is a VARCHAR column and the values used to define the partitions are string literals, the entire condition must be enclosed in double quotes, as in the following example: add partition toschemeset route=routeand condition="C2 = 'Fred'"

- If the column name must be enclosed in quotes, then the entire condition must be enclosed in quotes, as in the following example: add partition toschemeset route=routeand condition="\"c2\" > 0"

-

Do not:

- include references to other tables in the column condition.

- exceed the 1024 bytes maximum defined storage.

- During the activation of a configuration that refers to partition schemes, SharePlex verifies the syntax in the column conditions of those schemes. If any syntax is incorrect, the activation fails. SharePlex prints an error to the Event Log that indicates where the error occurred.

Specify partition schemes in the configuration file

Use one configuration file for all of the data that you want to replicate from a given datasource, including tables that will have full-table replication and those that will use partitioned replication. For more information about how to create a configuration file, see Configure SharePlex to replicate data.To configure entries for horizontally partitioned replication, use the following syntax.

| Datasource:o.SID |

| src_owner.table |

tgt_owner.table |

!partition_scheme |

| ! |

|

routing_map |

|

o.database |

The datasource designation. Use the o. notation for an Oracle source. For database, specify the ORACLE_SID. |

| src_owner.table and tgt_owner.table |

The specifications for the source and target tables, respectively. |

| !partition_scheme |

The name of the partition scheme to use for the specified source and target tables. The ! is required. The name is case-sensitive. Compound routing of multiple partition schemes is not supported, for example !schemeA+schemeB.

Create a separate entry for each partition scheme that you want to use for the same source table. See Examples. |

| ! routing_map |

A placeholder routing map. It is required only if a route that you used in a partition scheme is not listed somewhere in the configuration file. SharePlex requires every route to be in the configuration file even if it is listed in a partition scheme.

Notes:

- This option is valid only for partitions based on a column condition

- If using named queues, list each queue route with this option

- If routing the partition scheme to different targets, list each one with this option. You can use a compound routing map if the names of all target tables are identical.

|

See Examples. |

Examples

To specify a partition scheme:

| Datasource: o.mydb |

| scott.emp |

scott.emp_2 |

!partition_emp |

To specify multiple partition schemes for the same source table:

| Datasource: o.mydb |

| scott.emp |

scott.emp_2 |

!partition_schemeA |

| scott.emp |

scott.emp_3 |

!partition_schemeB |

To specify a placeholder routing map:

| ! targsys1 |

| ! targsys2@o.ora2+targsys3@o.ora3 |

This placeholder is only required for partitions based on column conditions.

View the partitions and schemes

Use the view partitions command to view the row partitions in one partition scheme or all partition schemes in a horizontally partitioned replication configuration.

To view row partitions:

- Run sp_ctrl on the source system.

-

Issue the following command with either option, depending on whether you want to view all partitions or just those for a particular partition scheme.

sp_ctrl> view partitions for {scheme_name | all}

The following example shows both a hash-based partition scheme and a column-based partition scheme.

sp_ctrl> view partitions all

Scheme Name Route Hash Condition

----------- ------------- ------------------------------ ------ ---------------

HASH4 hash sys02:hash|#@o.ora112 4 ROWID

TEST_CT highvalues sys02:highvalues@o.ora112 sales>=10000

TEST_CT lowvalues sys02:lowvalues@o.ora112 sales<10000

Hash4 hash-based partition scheme

- The Scheme column shows a partition scheme named HASH4.

- The Name column shows that the name for the partition definition is hash.

- The Route column shows that the partitions are created automatically and that the target is o.ora112.

- The Hash column has a value of 4, which indicates that this is a hash-based partition scheme with four partitions.

- The Condition column shows that the type of hash algorithm that is being used is the default of rowid, rather than block.

TEST_CT column-based partition scheme

- The Scheme column shows a partition scheme named TEST_CT. There are two entries for this name, indicating that it contains two partitions.

- The Name column shows the name of each partition, which by default is the name of the post queue or the value set with the Name option of the add partition command.

- The Route column shows that the names of the post queues are based on the parition name and that the target is o.ora112.

- The Hash column is empty for a column-based partition scheme.

- The Condition column shows the column condition that creates the row partition.

To view partition post queues:

The qstatus command on the target shows the post queues that are associated with horizontally partitioned replication.

Queues for TEST_CT column-based partition scheme

sp_ctrl sys02> qstatus

Queues Statistics for sys02

Name: highvalues (o.ora11-o.ora112) (Post queue)

Number of messages: 0 (Age 0 min; Size 1 mb)

Backlog (messages): 0 (Age 0 min)

Name: lowvalues (o.ora11-o.ora112) (Post queue)

Number of messages: 0 (Age 0 min; Size 1 mb)

Backlog (messages): 0 (Age 0 min)

Queues for HASH4 hash-based partition scheme:

Queues Statistics for sys02

Name: hash1 (o.ora11-o.ora112) (Post queue)

Number of messages: 0 (Age 0 min; Size 1 mb)

Backlog (messages): 0 (Age 0 min)

Name: hash2 (o.ora11-o.ora112) (Post queue)

Number of messages: 0 (Age 0 min; Size 1 mb)

Backlog (messages): 0 (Age 0 min)

Name: hash3 (o.ora11-o.ora112) (Post queue)

Number of messages: 0 (Age 0 min; Size 1 mb)

Backlog (messages): 0 (Age 0 min)

Name: hash4 (o.ora11-o.ora112) (Post queue)

Number of messages: 0 (Age 0 min; Size 1 mb)

Backlog (messages): 0 (Age 0 min)

Make changes to partition schemes

The following commands or parameters are available to manage partition schemes. For more information, see the SharePlex Reference Guide.

|

Modify a partition |

modify partition command |

Modifies any of the attributes of a row partition definition. |

|

Remove a partition scheme |

drop partition scheme command |

Removes the partition scheme and all row partitions within it. |

|

Change hash algorithm |

SP_OCF_HASH_BY_BLOCK |

Change the hash algorithm from the default of rowid-based to block-based. Set to 1 to enable block-based algorithm. |

Overview of Horizontally Partitioned Replication for PostgeSQL and PostgreSQL Database as a Service

Supported targets

PostgreSQL, Oracle, SQL Server, and Kafka

NOTE: PostgreSQL to SQL server replication does not support the BOOLEAN, TIME, TIME WITH TIME ZONE, and BYTEA data types for horizontally partitioned data.

To configure horizontally partitioned replication for a table, the steps are:

-

Define row partitions and link them to a partition scheme.

- Specify the name of the partition scheme in the SharePlex configuration file to include the partitions in replication.

Partition type

- Columns: A column-based partition scheme contains row partitions defined by a column condition. A column condition is a WHERE clause that defines a subset of the rows in the table.

About column-based partition schemes

You can use row partitions based on column conditions for the following purposes:

- Use a single row partition to replicate only a subset of the rows of a table. For example, you can replicate only those rows where the value of the YEAR column is greater than 2014. The partition scheme in this case could be named "Since2014" or "Recent."

-

Use multiple row partitions to divide the rows of a table so that each set of rows replicates to a different target. For example, a table named CORPORATE.SALES could have two row partitions named "East" and "West." The column conditions are defined accordingly, where the rows that satisfy REGION = EAST replicate to one location and the rows that satisfy REGION = WEST replicate to a different location. The partition scheme could be named "Sales_by_region."

-

Use multiple row partitions to divide the rows of a table into parallel processing streams (parallel Export-Import-Post streams) for faster posting to a target table. For example, you can improve the flow of replication to a heavily updated target table. The use of column conditions for this purpose is appropriate only if the table contains a column (recommended to use primary or non-null unique key) that enables you to split the processing evenly among parallel Post processes.

Combine partitioned replication with full-table replication

You can combine horizontally partitioned and vertically partitioned replication for maximum control over how information is distributed.

For example:

- A corporate headquarters maintains a primary corporate database.

- Each of the corporation's four regional offices maintains its own database.

- Corporate headquarters uses vertically partitioned replication to share some column data of a table with the regional offices, but does not share any columns that contain sensitive data.

- The rows of the table are horizontally partitioned into four groups (East, West, North, South) for replication, so that each region receives only the record changes that apply to it.

Horizontally partitioned replication can be used in conjunction with full-table replication for the same table, for example to route groups of rows to different reporting systems and all rows to a backup system.

Define partition schemes and row partitions

Use the add partition command to create row partitions and assign them to a partition scheme.

To partition rows based on column conditions:

Issue add partition for each row partition that you want to create in a given partition scheme. When you create the first row partition, SharePlex creates the partition scheme as well.

sp_ctrl> add partition to scheme_name setcondition = column_condition and route = routing_map [and name = name] [and tablename =schema.table] [and description =description]

Add partition command syntax

Note: After you specify add partition with toscheme_name and the set keyword, all other components can be in any order.

| to scheme_name |

to is a required keyword indicating the row partition is being added to scheme_name.

scheme_name is the name of the partition scheme. The partition scheme is created by the first add partition command that you issue, which will also specify the first set of rows to partition.

If you are making heavy use of horizontal partitioning, it may help to establish naming conventions for your partition schemes. |

|

set |

Required keyword that starts the definition of the row partition. |

| condition = column_condition |

Creates a row partition based on a column condition. The condition must be in quotes. Use standard WHERE conditional syntax such as ((region_id = West) and region_id is not null). See How to create a column condition for additional information. |

| route = routing_map |

The route for this partition. This can be one of the following:

Partition based on a column condition:

Specify any standard SharePlex routing map, for example: sysB@r.dbname or sysB:q1@r.dbname or sysB@r.dbname+sysC@r.dbname (compound routing map).

To route a partition to multiple target tables that have different names, do the following:

- Issue a separate add partition command for each different target name. Use the tablename option to specify the name.

- In the configuration file, specify any of these target tables as the target table in the entry that uses this partition scheme. SharePlex will detect the other names when the configuration is activated.

- Set the SP_ORD_FIRST_FIND parameter to 0 so that SharePlex checks all of the column conditions in the partition scheme. By default SharePlex assumes that any given row change will satisfy only one column condition in the partition scheme. For more information, see the SharePlex Reference Guide.

|

| name = name |

(Recommended) A short name for this partition. This option is only useful for partitions based on column conditions. A name eliminates the need to type out long column conditions in the event that you need to modify or drop the partition in the future. |

| tablename = schemaname.table |

(Optional) Use this option when there are multiple target tables and one or more have different names. Issue a separate add partition command for each name.

The table name must be fully qualified. If case-sensitive, the name must be specified in quotes.

Example:

add partition to scheme1 set name = p1 and condition = "C1 > 200" and route = sysb:p1@r.dbname and tablename = myschema.mytable |

| description = description |

(Optional) Description of this partition. |

Examples

Partitions based on a column condition

Route different sets of rows through different post queues:

sp_ctrl> add partition to scheme1 set name = q1 and condition = "C1 >= 200" and route = sysb:q1@r.dbname

sp_ctrl> add partition to scheme1 set name = q2 and condition = "C1 < 200" and route = sysb:q2@r.dbname

Route different sets of rows to different target systems and different table names from the source:

sp_ctrl> add partition to scheme1 set name = east and condition = "area = east" and route = sys1e@r.dbname and tablename = schema1.targ

sp_ctrl> add partition to scheme1 set name = west and condition = "area = west" and route = sys2w@r.dbname and tablename = schema2.targ

How to create a valid column condition

The following are guidelines for creating column conditions.

Choose appropriate columns

The types of columns on which you base your column conditions vary per datasource:

Base column conditions on columns whose values will not change, such as PRIMARY or UNIQUE key columns. The objective is to avoid a partition shift, where changes made to the conditional columns of a partition can cause the underlying data to satisfy the conditions of a different (or no) partition.

Partition shift case 1: The column value is updated so that the new value no longer satisfies any column condition:

- SharePlex performs the operation, but future operations on that row are not replicated. The reason: the row no longer satisfies a column condition.

- The source and target tables of the original partition are now out of synchronization, but Post returns no errors.

Partition shift case 2: A row that satisfies one column condition gets updated to meet a different condition:

- Post cannot find a matching target row. The reason: the original change was not replicated because it did not meet the column condition.

- Post returns an out-of-sync error.

Additionally, you can ensure that data is replicated properly by setting the following parameter on the source prior to activating the configuration file.

- Set the SP_ORD_HP_IN_SYNC parameter to a value of 1. When this parameter is enabled, if an UPDATE changes a column (conditional column) value resulting in a row that no longer satisfies the correct condition, SharePlex corrects the row. Enabling this parameter causes some performance degradation, depending on how many tables are configured for horizontally partitioned replication. See the SharePlex Reference Guide for more information and a list of conditions corrected by this parameter.

Note: If you are using a column other than a key to base the column condition on, and you notice reduced performance with horizontally partitioned replication enabled, add a log group for that column. In PostgreSQL, you can set replica identity FULL to use this parameter

Use supported data types

SharePlex supports the following data types in column conditions:

Notes:

-

For the dates, SharePlex uses MMDDSYYYYHH24MISS. For example:

hiredate<‘1111 2011000000’

-

Horizontally partitioned replication does not support the following:

- Data types other than the ones listed in this section.

-

Large datatypes like TEXT, BYTEA, CHAR > 2000 length, VARCHAR > 4000 length, VARCHAR without length will not be supported in column condition.

- UPDATEs or INSERTs on LONG columns larger than 100k (length of data to be updated or insertsin the column)

|

Use standard conditional syntax

The following list shows the conditional syntax that SharePlex supports in a column condition, where:

- value can be a string or a number. Enclose strings and dates within single quote marks (‘west’). Do not use quote marks for numbers .

- column is the name of a column in the table that you are configuring to use horizontally partitioned replication.

|

column = value

not (column = value)

column > value

value > column

column < value

column <= value

column >= value

column <> value

column != value

column like value

column between value1 and value2

not (column between value1 and value2 )

column is null

column is not null |

Conditions can be combined into nested expressions with parentheses and the AND, OR, and NOT logical connectives.

Example column conditions

not (col1 = 5)

(col2 = 5) and not (col3 = 6)

((col1 is not null) and (col2 = 5))

Additional guidelines

- NULLs are replicated by SharePlex in cases such as this one: not (department_id = 90). If department_id is NULL, it is replicated. To avoid replicating records with NULLs, include the column is not null syntax as part of the condition, for example: not (department_id = 90) and department_id is not null.

- If parentheses are not used to indicate operator precedence, SharePlex supports operator precedence in the same order that SQL does. For example, a condition not x and y behaves the same way as (not x) and y. A condition x and y or z behaves the same as (x and y) or z. When a condition includes parentheses, the explicit precedence is respected.

- If the condition column is a VARCHAR column and the values used to define the partitions are string literals, the entire condition must be enclosed in double quotes, as in the following example: add partition toschemeset route=routeand condition="C2 = 'Fred'"

- If the column name must be enclosed in quotes, then the entire condition must be enclosed in quotes, as in the following example: add partition toschemeset route=routeand condition="\"c2\" > 0"

-

Do not:

- include references to other tables in the column condition.

- exceed the 1024 bytes maximum defined storage.

- During the activation of a configuration that refers to partition schemes, SharePlex verifies the syntax in the column conditions of those schemes. If any syntax is incorrect, the activation fails. SharePlex prints an error to the Event Log that indicates where the error occurred.

Specify partition schemes in the configuration file

Use one configuration file for all of the data that you want to replicate from a given datasource, including tables that will have full-table replication and those that will use partitioned replication. For more information about how to create a configuration file, see Configure SharePlex to Replicate Data . To configure entries for horizontally partitioned replication, use the following syntax.

| Datasource:r.dbname |

| srcschemaname.table |

targetschemaname.table |

!partition_scheme |

| ! |

|

routing_map |

|

r.dbname |

The datasource designation. Use the r. notation for an PostgreSQL source. |

| src_schema.table and tgt_schema.table |

The specifications for the source and target tables, respectively. |

| !partition_scheme |

The name of the partition scheme to use for the specified source and target tables. The ! is required. The name is case-sensitive. Compound routing of multiple partition schemes is not supported, for example !schemeA+schemeB.

Create a separate entry for each partition scheme that you want to use for the same source table. See Examples. |

| ! routing_map |

A placeholder routing map. It is required only if a route that you used in a partition scheme is not listed somewhere in the configuration file. SharePlex requires every route to be in the configuration file even if it is listed in a partition scheme.

Notes:

- This option is valid only for partitions based on a column condition.

- If using named queues, list each queue route with this option

- If routing the partition scheme to different targets, list each one with this option. You can use a compound routing map if the names of all target tables are identical.

| |

Examples

To specify a partition scheme:

| Datasource: r.mydb |

| scott.emp |

scott.emp_2 |

!partition_emp |

To specify multiple partition schemes for the same source table:

| Datasource: r.mydb |

| scott.emp |

scott.emp_2 |

!partition_schemeA |

| scott.emp |

scott.emp_3 |

!partition_schemeB |

To specify a placeholder routing map:

| ! targsys1 |

| ! targsys2@r.dbname2+targsys3@r.dbname3 |

This placeholder is only required for partitions based on column conditions.

View the partitions and schemes

Use the view partitions command to view the row partitions in one partition scheme or all partition schemes in a horizontally partitioned replication configuration.

To view row partitions:

- Run sp_ctrl on the source system.

-

Issue the following command with either option, depending on whether you want to view all partitions or just those for a particular partition scheme.

sp_ctrl>view partitions for {scheme_name | all}

The following example shows a column-based partition scheme:

sp_ctrl> view partitions all

| product |

lessQuantity |

10.250.40.27@r.testdb |

splex.prod_1 |

id between 1 and 100 |

| product |

moreQuantity |

10.250.40.27@r.testdb |

splex.prod_2 |

id between 101 and 200 |

| product |

largeQuantity |

10.250.40.27@r.testdb |

splex.prod_3 |

id between 201 and 300 |

| sales_by_region |

east |

10.250.40.27@r.testdb |

splex.sales_dst1 |

((region = 'East') and region is not null) |

| sales_by_region |

west |

10.250.40.27@r.testdb |

splex.sales_dst2 |

((region = 'west') and region is not null) |

| city_scheme |

Pune |

10.250.40.27:pune_queue@r.testdb |

splex.student_target1 |

((stud_name = 'Pune') and stud_name is not null) |

| city_scheme |

Mumbai |

10.250.40.24:mumbai_queue@r.testdb |

splex.student_target2 |

((stud_name = 'Mumbai') and stud_name is not null) |

city_scheme column-based partition scheme

- The Scheme column shows a partition scheme named city_scheme. There are two entries for this name, indicating that it contains two partitions.

- The Name column shows the name of each partition, which by default is the name of the post queue or the value set with the Name option of the add partition command.

- The Route column shows that the names of the post queues are based on the parition name and that the target is r.testdb.

- The Condition column shows the column condition that creates the row partition.

To view partition post queues:

The qstatus command on the target shows the post queues that are associated with horizontally partitioned replication.

Queues for city_scheme column-based partition scheme

sp_ctrl (pslinuxpgsp11:2200)> qstatus

Queues Statistics for pslinuxpgsp11

Name: pune_queue (r.testdb-r.testdb) (Post queue)

Number of messages: 0 (Age 0 min; Size 1 mb)

Backlog (messages): 0 (Age 0 min)sp_ctrl (pslinuxpgsp08:2200)> qstatus

Queues Statistics for pslinuxpgsp08

Name: mumbai_queue (r.testdb-r.testdb) (Post queue)

Number of messages: 0 (Age 0 min; Size 1 mb)

Backlog (messages): 0 (Age 0 min)

Make changes to partition schemes

The following commands or parameters are available to manage partition schemes. For more information, see the SharePlex Reference Guide.

|

Modify a partition |

modify partition command |

Modifies any of the attributes of a row partition definition. |

|

Remove a partition scheme |

drop partition scheme command |

Removes the partition scheme and all row partitions within it. |

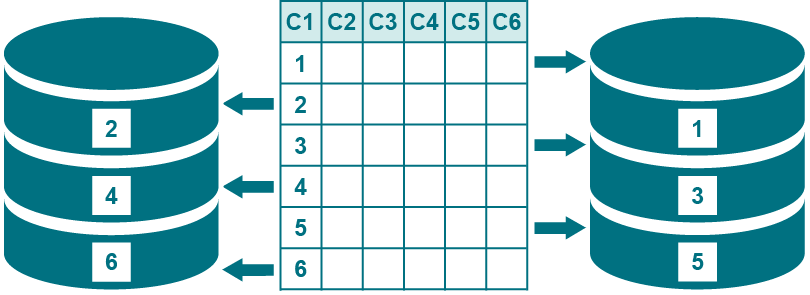

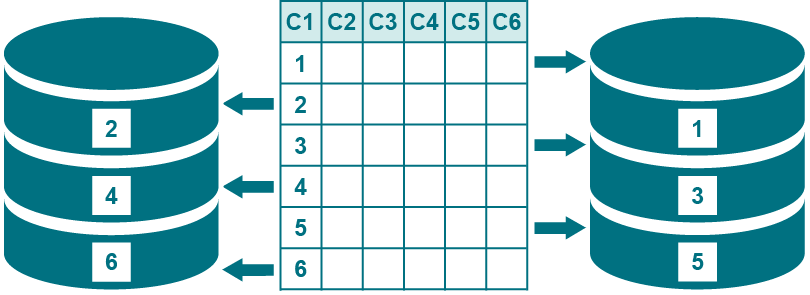

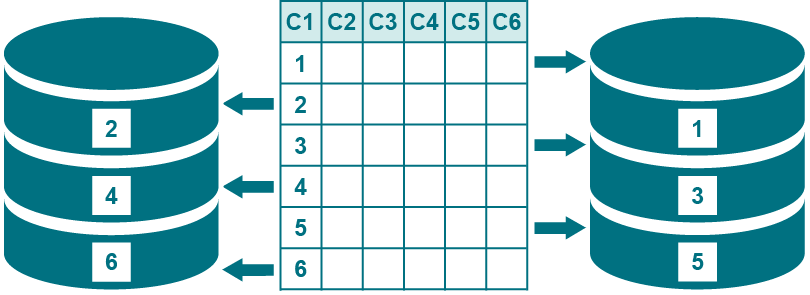

Use vertically partitioned replication to replicate a subset of the columns of a table. For example, you can replicate data changes made to C1, C2, C3, and C4, but not changes made to C5 and C6, as shown in the diagram.

Supported sources and targets

-

PostgreSQL to PostgreSQL, Oracle, SQL Server, and Kafka

-

Oracle to all targets

-

PGDB as a Service to PGDB as a Service

-

PGDB as a Service to Oracle

-

PGDB as a Service to PostgreSQL

Guidelines for using vertically partitioned replication

Follow these guidelines when creating a configuration file that includes vertically partitioned replication:

-

Vertically partitioned replication is appropriate for reporting and other data sharing strategies, but it is not appropriate for high availability environments. Once you configure a table for vertically partitioned replication, SharePlex does not recognize the other columns, so data in those columns is not replicated.

-

You can combine horizontally partitioned and vertically partitioned replication for maximum control over which information is distributed, and to where.

For example: A company has a headquarters and regional divisions. The headquarters maintains the corporate database, and each region maintains a regional database. The headquarters uses vertically partitioned replication to share some of the column data of a table to those locations, while retaining other sensitive data at headquarters. Row changes made to the shared columns are further partitioned horizontally, for replication to the appropriate regional database.

- A table cannot be configured to replicate some columns to one target system and all columns to another (combination of vertically partitioned replication and full-table replication). You can, however, configure full-table replication to an identical table on one target, and then configure vertically partitioned replication from that target to a second target that contains the table that requires only the partition columns.

- A target table can, but does not have to, contain all of the same columns as its source table. The target can contain just the columns being replicated from the source table. The names of corresponding source and target columns do not need to be the same. Corresponding columns must contain the same data types (same type, size, precision).

Overview of vertically partitioned replication: Oracle to all targets

To configure vertically partitioned replication, you specify either a column partition or an exclusion column partition in the configuration file:

- A column partition specifies the columns that you want to include in replication. Only data changes that are made to the specified columns get sent to the target.

- An exclusion column partition specifies columns to be excluded from replication. No data from those columns is replicated to the target.

Follow these rules to specify either type of column partition:

- There can be one partition per source table. A column partition and an exclusion partition are mutually exclusive.

- A column list must be enclosed within parentheses.

- Separate each column name with a comma. A space after the comma is optional.

- The maximum length of a partition is 174820 bytes (the maximum line length allowed in a configuration file). Therefore, the actual number of columns that you can list depends on the length of each name.

- The columns can be contiguous or non-contiguous in the source table. For example, you can replicate the first, third and seventh columns of a table.

- Key columns are not required to be included in the partition.

-

- If using horizontally partitioned and vertically partitioned replication together for this table, all of the columns in the partition scheme must be part of the column condition.

- Use one configuration file for all of the data that you want to replicate from a given datasource, including tables that will have full-table replication and those that will use partitioned replication.

To configure entries for vertically partitioned replication, use the following syntax. For more information about how to create a configuration file, see Configure SharePlex to replicate data.

| datasource_specification |

|

|

|

# table specification with column partition |

|

src_owner.table (src_col,src_col,...) |

tgt_owner.table [(tgt_col,tgt_col,...)] |

routing_map |

|

# table specification with exclusion column partition |

| src_owner.table !(src_col,src_col,...) |

tgt_owner.table |

routing_map |

| src_owner.table and tgt_owner.table |

The specifications for the source and target tables, respectively. |

|

(src_col, src_col,...) |

Specifies a column partition that lists the columns to include in replication. No other column data is replicated, including data in columns that are added after the start of replication (assuming DDL replication is enabled). |

|

!(src_col,src_col,...) |

Specifies an exclusion column partition that lists the columns to exclude from replication. All other column data is replicated, including data in columns that are added after the start of replication (assuming DDL replication is enabled).

Note: When using an exclusion column partition, the corresponding source and target column names must be identical, and the excluded columns cannot be used in a key definition. For more information, see Define a Unique Key . |

| (tgt_col,tgt_col,...) |

The target columns. Use this option to map source columns to target columns that have different owners or names. If the source and target columns have identical owners or names, the target columns can be omitted.

To map source columns to target columns, follow these rules:

- List the target columns in the same logical order as their corresponding source columns. This is required regardless of the actual order of the target columns in the table, so that SharePlex builds the correct correlations in the object cache. For example, a change to the second column in the source list is replicated to the second column in the target list.

|

| routing_map |

The routing map for the column partition. The routing map can be one of the following:

Important! A compound routing map must be used, rather than listing multiple targets in separate entries, because only one column condition per source table can be listed in the configuration file. To use a compound routing map, the owners and names of all of the target tables must be identical. For more information, see Routing Specifications in a Configuration File. |

Configuration examples

The following is a vertically partitioned replication configuration replicating to multiple targets by using a compound routing map. To use a compound routing map for this source table, all targets must be named scott.sal.

| Datasourceo.oraA |

|

|

| scott.emp (c1,c2) |

scott.sal |

sysB@o.oraB+sysC@o.oraC |

The following is a vertically partitioned replication configuration replicating to a single target where the target columns have different names from those of the source.

| Datasourceo.oraA |

|

|

| scott.emp (c1,c2) |

scott.sal (c5,c6) |

sysB@o.oraB |

The following configuration file is not valid because it repeats the same column partition of scott.emp (c1, c2) twice in the configuration file.

| Datasourceo.oraA |

|

|

| scott.emp (c1,c2) |

scott.cust (c1,c2) |

sysB@o.oraB |

| scott.emp (c1,c2) |

scott.sales (c1,c2) |

sysC@o.oraC |

Overview of vertically partitioned replication for PostgreSQL and PostgreSQL Database as a Service

Supported targets

PostgreSQL, Oracle, SQL Server, and Kafka

NOTE: PostgreSQL to SQL server replication does not support the BOOLEAN, TIME, TIME WITH TIME ZONE, and BYTEA data types for vertically partitioned data.

To configure vertically partitioned replication:

To configure vertically partitioned replication, you specify either a column partition or an exclusion column partition in the configuration file:

- A column partition specifies the columns that you want to include in replication. Only data changes that are made to the specified columns get sent to the target.

- An exclusion column partition specifies columns to be excluded from replication. No data from those columns is replicated to the target.

Follow these rules to specify either type of column partition:

- There can be one partition per source table. A column partition and an exclusion partition are mutually exclusive.

- A column list must be enclosed within parentheses.

- Separate each column name with a comma. A space after the comma is optional.

- The maximum length of a partition is 174820 bytes (the maximum line length allowed in a configuration file). Therefore, the actual number of columns that you can list depends on the length of each name.

- The columns can be contiguous or non-contiguous in the source table. For example, you can replicate the first, third and seventh columns of a table.

- Key columns are not required to be included in the partition. There can be a performance impact if you are not partitioning key columns.

- Use one configuration file for all of the data that you want to replicate from a given datasource, including tables that will have full-table replication and those that will use partitioned replication.

To configure entries for vertically partitioned replication, use the following syntax:

| datasource_specification |

|

|

|

# table specification with column partition |

|

src_schema.table (src_col,src_col,...) |

tgt_schema.table [(tgt_col,tgt_col,...)] |

routing_map |

|

# table specification with exclusion column partition |

| src_schema.table !(src_col,src_col,...) |

tgt_schema.table |

routing_map |

| src_schema.table and tgt_schema.table |

The specifications for the source and target tables, respectively. |

| (tgt_col,tgt_col,...) |

The target columns. Use this option to map source columns to target columns that have different schemas or names. If the source and target columns have identical schemas or names, the target columns can be omitted.

To map source columns to target columns, follow these rules:

- List the target columns in the same logical order as their corresponding source columns. This is required regardless of the actual order of the target columns in the table, so that SharePlex builds the correct correlations in the object cache. For example, a change to the second column in the source list is replicated to the second column in the target list.

|

| routing_map |

The routing map for the column partition. The routing map can be one of the following:

Important! A compound routing map must be used, rather than listing multiple targets in separate entries, because only one column condition per source table can be listed in the configuration file. To use a compound routing map, the schemas and names of all of the target tables must be identical. |

Configuration examples

The following is a vertically partitioned replication configuration replicating to multiple targets by using a compound routing map. To use a compound routing map for this source table, all targets must be named scott.sal.

| Datasource: r.dbname |

|

|

| scott.emp (c1,c2) |

scott.sal |

sysB@r.dbname1 + sysC@r.dbname2 |

The following is a vertically partitioned replication configuration replicating to a single target where the target columns have different names from those of the source.

| Datasource: r.dbname |

|

|

| scott.emp (c1,c2) |

scott.sal (c5,c6) |

sysB@r.dbname1 |

The following configuration file is not valid because it repeats the same column partition of scott.emp (c1, c2) twice in the configuration file.

| Datasource: r.dbname |

|

|

| scott.emp (c1,c2) |

scott.cust (c1,c2) |

sysB@r.dbname1 |

| scott.emp (c1,c2) |

scott.cust (c1,c2) |

sysC@r.dbname2 |