-

Title

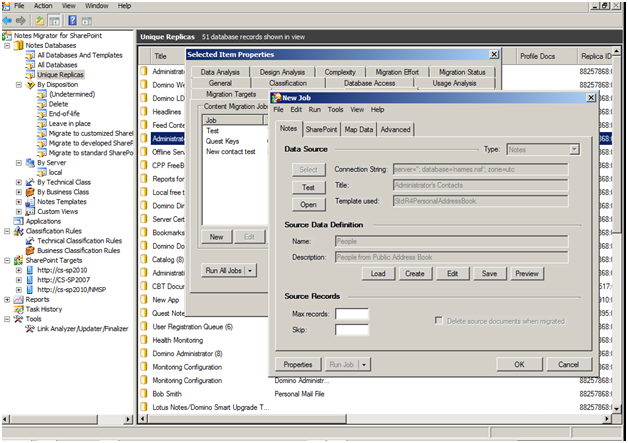

Restricting the number of records per job by using the Max Records and Skip settings.

-

Description

Performance concerns create an interest in restricting the number of records a job migrates:

Is there any way to split a data migration job for a very large NSF file into multiple chunks?

We have a particular database that is very large, and we want to run this job in sets of 200 in production to avoid any environmental or performance issues.

-

Cause

Performance concerns

-

Resolution

The Max Records option allows you to limit the number of Notes records to be migrated to a predetermined number. The Skip option allows you to skip a number of Notes records before starting migration. When used in conjunction with the "Max Records" option, you can migrate data in distinct chunks.

Example: Suppose you have 30,000 records and, because of performance concerns, you decide to run the jobs in batches of 5,000. The first job would have "max" set to 5000 and "skip" left blank. The second job (a new job) would have "max" 5000 and "skip" 5000. The third job would have "max" 5000 and "skip" 10000, and so on, until you reach 30,000 or whatever the final record count is.
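
The batch arithmetic in the example above can be sketched in a few lines of Python. This is only an illustrative planning aid, not part of the migration tool: the function name `plan_batches` and the dictionary keys are hypothetical, and a skip of 0 stands in for leaving the "skip" field blank on the first job.

```python
def plan_batches(total_records: int, batch_size: int) -> list[dict]:
    """Return one {"job", "max", "skip"} entry per migration job.

    Hypothetical helper: it only computes the values you would type
    into the "max" and "skip" fields; it does not talk to any tool.
    """
    if total_records < 0 or batch_size <= 0:
        raise ValueError("total_records must be >= 0 and batch_size must be > 0")

    plan = []
    # Each job skips everything earlier jobs already covered.
    for job_number, skip in enumerate(range(0, total_records, batch_size), start=1):
        plan.append({"job": job_number, "max": batch_size, "skip": skip})
    return plan


if __name__ == "__main__":
    # The 30,000-record example in batches of 5,000 yields six jobs.
    for entry in plan_batches(30000, 5000):
        print(entry)
```

For the case in the description (sets of 200), calling `plan_batches(total, 200)` with the database's actual record count would list the skip value for each job in the same way. The last job may cover fewer records than "max" if the total is not an exact multiple of the batch size, which is fine because "max" is an upper limit.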